
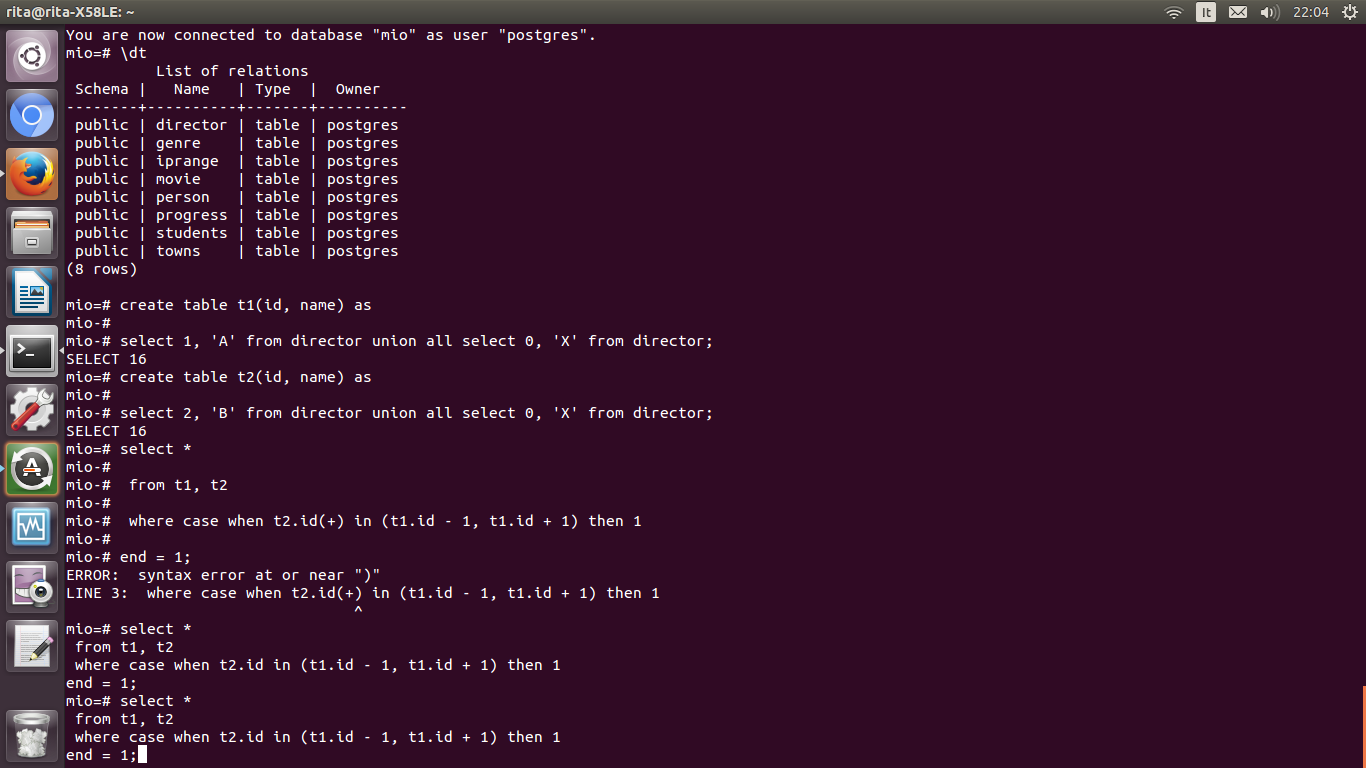

I tried to load the file with the COPY command as the student user:

COPY transaction_upload FROM '/u01/app/upload/postgres/transaction_upload_postgres.csv' DELIMITERS ',' CSV;

It failed with the following error:

ERROR: must be superuser or a member of the pg_read_server_files role to COPY from a file

HINT: Anyone can COPY to stdout or from stdin. psql's \copy command also works for anyone.

The two options for fixing the problem are changing the student user to a superuser or granting the pg_read_server_files role to the student user. Changing the student user to a superuser isn't really a practical option. So, I connected as the postgres superuser and granted the pg_read_server_files role to the student user. It is a system-level role, so the grant isn't limited to the videodb database.

As the postgres user, type the following command to grant the pg_read_server_files role to the student user:

GRANT pg_read_server_files TO student;

As an aside for anyone loading from a cluster: to my knowledge, Spark does not provide a way to use the copy command internally. If you want to load Postgres from HDFS, you might be interested in Sqoop. It can export a CSV file stored on HDFS, and it is able to produce multiple COPY statements.

With the role granted, I experimented with a file that contained a malformed row:

ERROR: missing data for column "last_name"

CONTEXT: COPY tester, line 3: "3,Michael Swan"

The error names the first column that received no data. Here the comma between Michael and Swan appears to be missing, so last_name is the column reported. The context gives the line number and displays the text of the offending line. PostgreSQL is just as strict about quoting in CSV input: all open unescaped quotation punctuation must have a matching piece of unescaped quotation punctuation or it generates an error.

You should take careful note that the COPY command is an appending command. If you run it a second time, you insert a duplicate set of values in the target table.

After experimenting, it's time to fix my student instance. The transaction_upload_mysql.csv file has two critical errors that need to be fixed. A comma terminates each line, which would raise an extra data after last expected column error. On some lines the trailing comma is followed by an indefinite amount of whitespace, which would raise the same error.

Since I have students with little expertise in Unix or Linux commands, I must provide a single command that they can use to convert the file with problems to one without problems. However, they should work on a copy of the transaction_upload_mysql.csv file to ensure they don't disable the equivalent functionality for the MySQL solution space.
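One way to sketch such a single command is with sed. The post does not show the command actually handed to students, so treat this as an assumption rather than the original fix. The file names sample_mysql.csv and sample_postgres.csv and the two data rows are hypothetical stand-ins; the pattern removes one trailing comma plus any whitespace after it and writes the result to a new file, leaving the input untouched.

```shell
# Hypothetical sample standing in for the MySQL upload file:
# line 1 ends in a bare comma, line 2 in a comma followed by spaces.
printf '1,Harry,Potter,\n2,Ginny,Potter,   \n' > sample_mysql.csv

# Strip one trailing comma and any whitespace that follows it.
# Output goes to a separate file, so the MySQL copy is not modified.
sed 's/,[[:space:]]*$//' sample_mysql.csv > sample_postgres.csv

# Show the cleaned rows.
cat sample_postgres.csv
```

Against the real files, the same sed expression would read transaction_upload_mysql.csv and write transaction_upload_postgres.csv. Note that it would also remove a trailing comma that legitimately marks an empty last column, so it only suits files where every trailing comma is an error.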

CSV formatting

As described in the CSV Format section of PostgreSQL's documentation, with the following caveats:

- More than one layer of escaped quote characters returns the wrong result.
- Quote characters must immediately follow a delimiter to be treated as quotes.
- Single-column rows containing quoted end-of-data markers are treated as end-of-data markers despite being quoted. In PostgreSQL, such data would be escaped and would not terminate the data processing.
- Quoted null strings will be parsed as nulls, despite being quoted. To ensure proper null handling, we recommend specifying a unique string for null values, and ensuring it is never quoted.
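Both COPY errors quoted in this post (missing data for a column, and extra data after last expected column) come down to a row with the wrong number of fields. A rough pre-check before loading might look like the sketch below. It assumes a three-column table and no quoted fields containing commas, since a quoted value may legally contain the delimiter and a plain comma split would miscount it. The file name sample_tester.csv and the first two rows are hypothetical; only the third row comes from the error message above.

```shell
# Hypothetical three-column sample; line 3 is the short row from the error message.
printf '1,Harry,Potter\n2,Ginny,Potter\n3,Michael Swan\n' > sample_tester.csv

# Print the line number and text of every row whose field count is not 3.
# Assumption: no quoted field contains a comma.
awk -F',' -v expected=3 'NF != expected { print NR ": " $0 }' sample_tester.csv
```

With this sample the check prints `3: 3,Michael Swan`, the same line that COPY reports in its CONTEXT message.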

Details Text formattingĪs described in the Text Format section of PostgreSQL’s documentation. Note that DELIMITER and QUOTE must use distinct values. Specifies that the file contains a header line with the names of each column in the file. Specifies the character to allow instances of the QUOTE character to be parsed literally as part of a column’s value. To include the QUOTE character itself in column, wrap the column’s value in the QUOTE character and prefix all instance of the value you want to literally interpret with the ESCAPE value. Specifies the character to signal a quoted string, which may contain the DELIMITER value (without beginning new columns). Specifies the string that represents a NULL value.

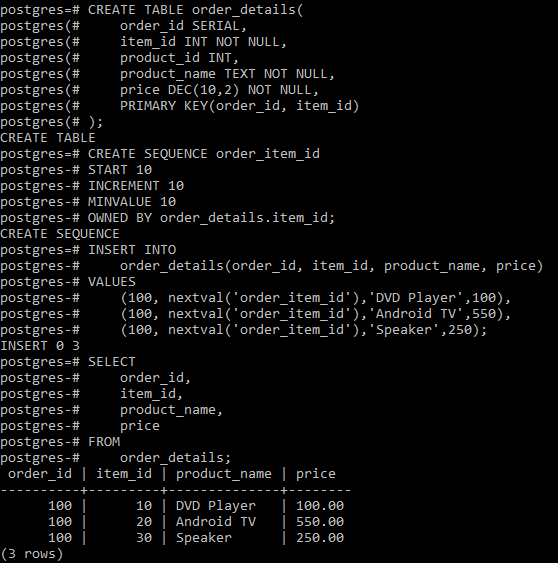

Overrides the format’s default column delimiter. For more information see Text formatting, CSV formatting. postgres CREATE TABLE employee ( postgres( ID int, postgres( name varchar(10), postgres( salary real, postgres( startdate date. The following options are valid within the WITH clause. With a partial column list, all unreferenced columns will receive their default value. Without a column list, all columns must have data provided, and will be referenced using their order in the table. the first column of the row to insert is correlated to the first named column. COPY table_name ( column, ) FROM STDIN WITH ( field val, ) FieldĬorrelate the inserted rows' columns to table_name’s columns by ordinal position, i.e.
